You know that feeling when you order food on DoorDash, and you don’t really think about the kitchen, the stove, the supply chain that grew the tomatoes, or the truck that delivered them? You just tap “order” and food appears. That’s how most of us interact with AI right now. You type a prompt into ChatGPT or Copilot, magic happens somewhere in a datacenter, and words appear on your screen.

But here’s the thing: there’s an absolute WAR happening in those kitchens right now. And it’s about to reshape the entire tech industry.

A few weeks ago, Microsoft dropped a bomb: Maia 200, their custom-built AI chip. Not a GPU they bought from NVIDIA. Not something off the shelf. A chip they designed from scratch, fabricated on TSMC’s 3nm process, with 140 billion transistors, delivering 10+ petaFLOPS of low-precision (FP4) compute. It’s already deployed in datacenters near Des Moines, Iowa, running OpenAI’s GPT-5.2 models.

And my first reaction was: “Wait… Microsoft makes CHIPS now?”

Yeah. So does Google. So does Amazon. So does Meta. Welcome to the Silicon Wars, and nobody’s talking about it enough.

The Restaurant Analogy (Bear With Me)

Imagine you run the biggest restaurant chain in the world. Every single dish you serve requires one ultra-premium ingredient. Let’s call it “Magic Salt.” There’s only one supplier of Magic Salt on the planet, and their name is NVIDIA. They charge whatever they want because where else are you gonna go? Their gross margins run around 75-80%. You’re paying $4 for something that costs them $1 to make.
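To make the salt math concrete, here’s a tiny sketch of how gross margin ties price to cost. The $4 and $1 are the illustrative numbers from the analogy, not real NVIDIA pricing, and the function names are just mine.

```python
def gross_margin(price: float, cost: float) -> float:
    """Fraction of the selling price left over after the cost to make it."""
    return (price - cost) / price


def price_for_margin(cost: float, margin: float) -> float:
    """Selling price needed to hit a given gross margin on a given cost."""
    return cost / (1.0 - margin)


# Illustrative numbers from the analogy, not real pricing.
print(gross_margin(price=4.0, cost=1.0))        # 0.75
print(price_for_margin(cost=1.0, margin=0.80))  # about 5.0
```

So a 75% margin is the $4-for-$1 deal, and at 80% the same $1 of salt sells for about $5.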

Now, when you were a small restaurant, you sucked it up. The Magic Salt was worth it. But now you’re serving billions of meals a day, and that markup is costing you BILLIONS of dollars a year. Every single plate of food, every single AI query, every Copilot suggestion in Microsoft Word, all of it needs Magic Salt.

So what do you do? You buy your own damn salt farm.

That’s literally what’s happening. Microsoft built Maia. Google has been building TPUs since 2015. Amazon built Trainium and Inferentia. They’re all saying “we’re done paying the NVIDIA tax”, at least for the workloads they can control.

But Why Can’t Everyone Just Use NVIDIA?

Okay, real talk. NVIDIA makes incredible hardware. The A100, H100, and B200 are engineering marvels. So why not just keep buying them?

Three reasons, and this is where it gets spicy:

First, the money. At hyperscaler scale, even small efficiency gains translate to billions. Microsoft claims Maia 200 delivers 30% better performance per dollar than their current fleet. That’s 30% more work for the same money, or roughly 23% less money for the same work. When you’re running millions of chips, that’s… a lot of fucking money. It’s like discovering your restaurant has been overpaying for salt on every dish for a decade.
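One thing worth spelling out: “30% better performance per dollar” is not the same as a 30% smaller bill. Here’s a quick sketch of the arithmetic. The $10B yearly spend is a made-up number purely for illustration, and the function names are my own.

```python
def cost_reduction(perf_per_dollar_gain: float) -> float:
    """Fractional drop in cost for the same amount of work.

    A gain of 0.30 means each dollar buys 1.30x the work, so the same
    work costs 1 / 1.30 of what it did before.
    """
    return 1.0 - 1.0 / (1.0 + perf_per_dollar_gain)


def annual_savings(baseline_spend: float, perf_per_dollar_gain: float) -> float:
    """Dollars saved per year if the workload stays the same size."""
    return baseline_spend * cost_reduction(perf_per_dollar_gain)


print(f"{cost_reduction(0.30):.1%}")  # 23.1%

# Hypothetical $10B yearly inference bill, purely for illustration.
print(f"${annual_savings(10e9, 0.30) / 1e9:.2f}B")  # $2.31B
```

Still a very large number at hyperscaler scale, just not quite a third off.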

Second, the supply chain. Right now, every tech giant on earth is fighting for the same limited pool of NVIDIA GPUs. Microsoft, Google, Amazon, Meta, Oracle, xAI, all of them in a bidding war, waiting in line at the same factory. Custom silicon gives you a parallel supply chain. You’re no longer entirely dependent on one company’s production schedule and pricing decisions.

Third, and this is the nerdy part, inference is different from training. NVIDIA’s GPUs are Swiss Army knives. They’re great at everything: training, inference, scientific computing, graphics. But that generality is expensive. For pure inference (which is what happens every time you send a prompt), you don’t need most of what makes an NVIDIA GPU pricey. Maia 200 strips away everything unnecessary and pours every transistor into the one job it needs to do: generate tokens fast and cheap. It’s like hiring a Formula 1 engineer to deliver pizzas versus just getting a really efficient delivery driver. Both get the pizza there, but one costs way less.

The CPU as Orchestra Conductor

Here’s where my brain really went “oh shit” when I started digging into this.

Your CPU (the Intel, AMD, or Qualcomm chip in your laptop) doesn’t actually do the heavy AI math. It’s the conductor of an orchestra. It tells specialized hardware what to do and when to do it.

This pattern of “delegate to specialists” is as old as computing itself:

FPU (Floating Point Unit): Back in the early 1980s, most PC CPUs had no hardware for floating-point math. If you needed to calculate 3.14 × 2.71, the CPU either ground through it slowly in software or handed it to a separate chip called the FPU (Intel’s 8087 is the classic example). It was literally a second chip you’d plug into your motherboard next to the CPU. It was so useful that eventually they just absorbed it into the CPU itself. The OG specialist who got hired full-time.

GPU (Graphics Processing Unit): Originally built to render video game pixels. Thousands of tiny cores doing simple math in parallel. Researchers in the 2000s realized “wait, neural networks are basically billions of simple math operations in parallel” and started hijacking GPUs for AI. NVIDIA noticed and leaned into it hard. The rest is history.

NPU (Neural Processing Unit): The new kid. This is a tiny AI accelerator built into your phone and laptop. Think Apple’s Neural Engine or Qualcomm’s Hexagon processor. It handles on-device stuff like face recognition, voice processing, and background blur on video calls, without needing the cloud. Think of it as Maia’s little cousin that lives on your phone.

TPU (Tensor Processing Unit): Google’s custom chip, the pioneer of this whole “build your own AI silicon” movement. The name comes from “tensor”, a multi-dimensional array of numbers, the fundamental data structure in deep learning. Gemini is trained and served on TPUs, and they do a lot of the heavy lifting behind Google Search too.

ASICs (Application-Specific Integrated Circuits): The umbrella term for purpose-built chips. An ASIC is designed to do ONE thing insanely well. Bitcoin miners use ASICs. TPUs are ASICs. Maia 200 is an ASIC. (CPUs and GPUs, by contrast, are general-purpose and programmable.) The tradeoff is always the same: you sacrifice flexibility for raw speed and efficiency at that one task.
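To see why “billions of simple math operations in parallel” is such a good fit for this kind of hardware, here’s a minimal sketch in plain Python. One layer of a neural network boils down to a matrix times a vector, and every output value is its own independent dot product. The threads here only illustrate that independence; Python threads won’t actually make this faster, and real accelerators do it in silicon across thousands of lanes.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row: list[float], vec: list[float]) -> float:
    """One output value: multiply pairwise, then add everything up."""
    return sum(r * v for r, v in zip(row, vec))


def matvec_serial(matrix, vec):
    """One worker computes every output in turn."""
    return [dot(row, vec) for row in matrix]


def matvec_parallel(matrix, vec, workers: int = 4):
    """No row depends on any other row, so rows can be farmed out."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: dot(row, vec), matrix))


weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # a toy 3x2 "layer"
inputs = [10.0, 1.0]

print(matvec_serial(weights, inputs))    # [12.0, 34.0, 56.0]
print(matvec_parallel(weights, inputs))  # [12.0, 34.0, 56.0]
```

Same answer either way, which is the whole point: the work splits cleanly, so you can throw as many tiny cores at it as you can fit on a chip.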

So when you ask Copilot a question, here’s the orchestra playing: the CPU handles your request, figures out routing and authentication, packages up the prompt, ships it to a Maia 200 (or GPU), which crunches billions of matrix operations, spits back tokens, and the CPU reassembles the response and sends it to you. The CPU barely broke a sweat. But without it, Maia wouldn’t know what to do or who to talk to.
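Here’s that orchestra as a toy sketch. Everything in it is hypothetical: `Request`, `handle`, and `fake_accelerator` are names I made up, and the “accelerator” just uppercases words where a real one would run the model. The point is the shape of the flow, with the CPU doing the cheap coordination and handing off the expensive step.

```python
from dataclasses import dataclass


@dataclass
class Request:
    user: str
    token: str
    prompt: str


def fake_accelerator(prompt_tokens: list[str]) -> list[str]:
    """Stand-in for the Maia/GPU step; a real one would run the model here."""
    return [tok.upper() for tok in prompt_tokens]


def handle(request: Request, valid_tokens: set[str]) -> str:
    # 1. CPU: authentication.
    if request.token not in valid_tokens:
        raise PermissionError("unknown token")
    # 2. CPU: package up the prompt.
    prompt_tokens = request.prompt.split()
    # 3. Accelerator: the heavy math (faked here).
    output_tokens = fake_accelerator(prompt_tokens)
    # 4. CPU: reassemble the response.
    return " ".join(output_tokens)


print(handle(Request("ana", "t-123", "hello silicon wars"), {"t-123"}))
# HELLO SILICON WARS
```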

The CUDA Problem (Or: The World’s Most Powerful Lock-In)

Now here’s the plot twist that makes this whole story way more complicated.

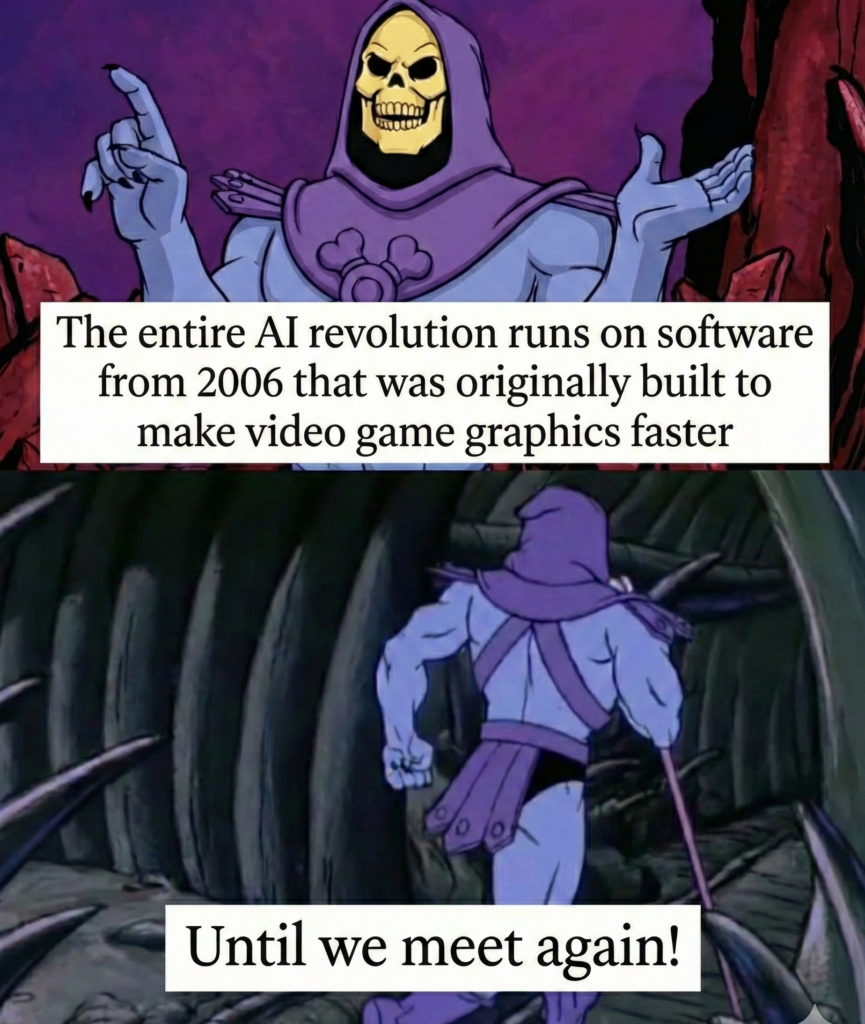

NVIDIA didn’t just build great hardware. They built CUDA, a software platform that lets developers write code that runs on NVIDIA GPUs. It was announced in 2006, and over nearly 20 years, it’s become the invisible foundation under ALL of modern AI.

When someone says “I trained a model in PyTorch,” what’s actually happening is: PyTorch calls cuDNN (a CUDA library), which calls CUDA kernels that execute on NVIDIA hardware. CUDA is the translator between human code and GPU silicon.

And it has a 20-year ecosystem: 4 million developers, thousands of optimized libraries. Every AI paper, every university course, every startup prototype, all built on CUDA. It’s the QWERTY keyboard of AI computing. Even if alternatives are technically better, the ecosystem gravity is massive.

This is NVIDIA’s real power. Not the chips, the software. Building a competing chip is hard. Building a competing chip AND recreating 20 years of software ecosystem from scratch? That’s the actual boss fight.

That’s why Microsoft ships the Maia SDK with PyTorch support and a Triton compiler; they’re trying to meet developers where they already are. Google did the same with JAX and XLA for TPUs. Amazon did it with the Neuron SDK for Trainium. Everyone’s building bridges FROM the CUDA world TO their custom silicon, because asking developers to start from scratch is a death sentence.

The Dream: One Compiler to Rule Them All

Here’s the question that keeps popping into my head: if everyone is building different chips with different architectures, why not build an open-source universal compiler that takes your code and runs it on ANY hardware? Write once, run everywhere?

Turns out, this is the holy grail of AI infrastructure, and multiple serious efforts exist:

MLIR (from Google, now an LLVM project): designed as a universal intermediate layer that different hardware backends can plug into. This is probably the most credible long-term candidate.

OpenAI’s Triton: open source, lets you write GPU kernels in Python that compile to multiple hardware targets. Microsoft is literally using Triton for Maia 200.

OpenCL: was supposed to solve this more than 15 years ago. “Write once, run on any accelerator.” It technically worked, but it optimized for portability over performance. Code that ran everywhere ran optimally nowhere. NVIDIA had zero incentive to make OpenCL fast on their hardware when CUDA was their lock-in strategy. It’s a cautionary tale.

The fundamental challenge? Each chip has a radically different architecture underneath. Writing one compiler that generates optimal code for all of them is like writing one recipe that tastes perfect whether you cook it on a gas stove, an electric oven, a microwave, or a campfire. You can make something that technically works on all of them, but it won’t be amazing on any of them.

The realistic future probably isn’t one universal compiler but a layered stack: you write in PyTorch (high level), it lowers to Triton or MLIR (intermediate), which compiles to hardware-specific backends (CUDA for NVIDIA, NPL for Maia, XLA for TPU). Each layer absorbs the complexity, so you care less about what’s underneath. CUDA doesn’t die; it becomes one of several backends that a smarter compiler targets for you.
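Here’s a toy sketch of that layered idea, under heavy assumptions. The op names, the `lower` table, and the `cuda::` / `xla::` strings are all labels I invented for illustration; real compilers emit actual kernels, not strings. What it shows is the structure: one high-level op, one shared intermediate form, and a registry of backends that each know how to target one kind of hardware.

```python
from typing import Callable, Dict, List

# Backend registry: target name -> function that turns IR ops into "code".
BACKENDS: Dict[str, Callable[[List[str]], List[str]]] = {}


def backend(name: str):
    """Decorator that registers a code generator for one hardware target."""
    def register(fn):
        BACKENDS[name] = fn
        return fn
    return register


def lower(high_level_op: str) -> List[str]:
    """High level -> intermediate: split a framework op into primitive ops."""
    table = {"linear": ["matmul", "add_bias"], "relu": ["max_zero"]}
    return table[high_level_op]


@backend("nvidia")
def emit_nvidia(ir: List[str]) -> List[str]:
    return [f"cuda::{op}" for op in ir]  # made-up label, not a real API


@backend("tpu")
def emit_tpu(ir: List[str]) -> List[str]:
    return [f"xla::{op}" for op in ir]  # made-up label, not a real API


def compile_for(high_level_op: str, target: str) -> List[str]:
    """Same source op, different hardware, chosen at compile time."""
    return BACKENDS[target](lower(high_level_op))


print(compile_for("linear", "nvidia"))  # ['cuda::matmul', 'cuda::add_bias']
print(compile_for("linear", "tpu"))     # ['xla::matmul', 'xla::add_bias']
```

Adding a new chip means registering one more backend. The code at the top of the stack doesn’t change, which is exactly why the middle layer is where the leverage is.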

The Edge Play: Where Qualcomm Enters the Chat

While Microsoft, Google, and Amazon fight over datacenter AI, there’s a completely different war happening in your pocket.

Qualcomm is betting that the future of AI isn’t in massive datacenter chips, it’s on your phone. Their Snapdragon chips pack a CPU, GPU, NPU, modem, and more onto one tiny chip that runs on a few watts. Compare that to Maia 200’s 750W power draw. Completely different philosophy.

The thesis: something like 80% of your AI interactions don’t need datacenter-scale compute. Autocomplete, photo enhancement, voice recognition, real-time translation, and basic Q&A with a small local model can all run locally. No cloud round-trip, instant response, your data never leaves your device.

There are roughly 3-4 billion Snapdragon-powered devices in the world. If Qualcomm nails on-device AI, they become the largest deployed AI compute platform on earth. Not by FLOPS per chip, but by sheer number of devices running inference.

And here’s what gets me excited: on-device AI works in places with limited connectivity. It reduces the cost per query to near zero. It keeps user data private. It’s the democratization angle: AI that works for everyone, everywhere, not just people with fast internet and expensive cloud subscriptions.

NVIDIA’s Power Move: Turning Cell Towers into AI Data Centers

Just when you think the Silicon Wars are confined to datacenters, NVIDIA goes and flips the entire chessboard.

In October 2025, NVIDIA invested $1 billion in Nokia, yeah, THAT Nokia, for a 2.9% stake. But this isn’t some random portfolio investment. It’s a strategic play to put NVIDIA AI chips inside cell towers worldwide. The concept is called AI-RAN (AI Radio Access Network), and it’s genuinely wild.

Here’s the deal. Right now, cell towers are basically dumb pipes. Your phone sends data, the tower relays it, done. AI-RAN transforms those towers into mini AI data centers. The network can adjust signal strength based on crowd density, reroute traffic before congestion hits, and (here’s the kicker) process AI requests locally, right at the tower, without sending your data all the way to a datacenter in Iowa.

Remember how we talked about Qualcomm bringing AI to the edge through your phone? NVIDIA is saying, “Why stop at the phone? Let’s make the NETWORK itself intelligent.” Every cell tower becomes a compute node. Nokia’s CEO called it “putting an AI data center into everyone’s pocket.”

Think about what this means. Today, when you use ChatGPT on your phone, your request travels through cell towers (dumb pipes) → across the internet → to a datacenter → hits a GPU or Maia chip → response travels all the way back. With AI-RAN, some of that processing happens right at the tower closest to you. Lower latency, less backbone traffic, and new revenue streams for telecom companies who’ve been struggling to monetize 5G.

And here’s the chess move that makes this so NVIDIA: by getting Nokia to build their next-gen base stations on NVIDIA’s platform, they’re locking in another massive market for their chips. It’s not just datacenters anymore. It’s not just laptops and phones. It’s the entire wireless infrastructure. NVIDIA is essentially trying to become the Intel Inside of… everything.

The AI-RAN market is expected to exceed a cumulative $200 billion by 2030, and T-Mobile is already working with Nokia and NVIDIA to integrate this into its network. This is not a whitepaper; it’s being deployed.

Though there are skeptics. Some telecom operators argue that putting datacenter-grade GPUs in cell towers is overkill; one critic compared it to “powering a Vespa with a V8 engine.” The power consumption alone is a real concern when you’re managing thousands of tower sites. But NVIDIA’s betting that the AI workload demands will grow so fast that what seems like overkill today becomes necessary infrastructure tomorrow. Sound familiar? That’s the same bet they made on datacenter GPUs in 2016, and look how that turned out.

So, Where Does This Leave Us?

A year ago, I wrote about the personalized future of AI: on-device processing, custom models, and the tension between cloud and edge. What I didn’t fully appreciate then was the silicon layer underneath that makes all of it possible or impossible.

Here’s what I see now:

The chip IS the product strategy. When Microsoft builds Maia, they’re not making a chip; they’re making Copilot cheaper, Azure more competitive, and OpenAI’s models more accessible. The silicon decisions directly shape what products can exist and at what price.

Vertical integration is winning. Steve Jobs loved quoting Alan Kay: “People who are really serious about software should make their own hardware.” Apple proved it with M-series chips. Now every hyperscaler is proving it with AI accelerators. The companies that control the full stack, from silicon to software to service, will have structural cost advantages that pure software companies can’t match.

The real battle is compilers, not chips. Whoever builds the best abstraction layer that makes hardware interchangeable wins the developer ecosystem. Chips are expensive and slow to iterate. Software moves fast. The compiler layer is where the next CUDA-level power will be built.

Edge and cloud will coexist, not compete. Your phone handles the quick, private, latency-sensitive stuff. The cloud handles the heavy reasoning that needs trillion-parameter models. The interesting product opportunities live at the boundary: when does a query stay local, and when does it escalate to the cloud?
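That boundary question is ultimately a routing policy, and here’s a minimal sketch of what one could look like. The rules, the `Query` fields, and the 50-word threshold are all assumptions I picked for illustration; a real product would tune these against actual latency, cost, and quality data.

```python
from dataclasses import dataclass


@dataclass
class Query:
    text: str
    contains_private_data: bool = False
    needs_deep_reasoning: bool = False


def route(query: Query, online: bool, local_word_limit: int = 50) -> str:
    """Return "local" or "cloud" for a query (illustrative rules only)."""
    # No connection or sensitive data: it has to stay on the device.
    if not online or query.contains_private_data:
        return "local"
    # Heavy reasoning goes to the big models in the datacenter.
    if query.needs_deep_reasoning:
        return "cloud"
    # Long prompts are assumed to exceed what the small local model handles.
    if len(query.text.split()) > local_word_limit:
        return "cloud"
    # Everything else is quick stuff the phone can answer itself.
    return "local"


print(route(Query("translate good morning"), online=True))  # local
print(route(Query("plan my product launch", needs_deep_reasoning=True), online=True))  # cloud
print(route(Query("plan my product launch", needs_deep_reasoning=True), online=False))  # local
```

Notice the order of the checks: privacy and connectivity win before capability does. That ordering is a product decision, not a technical one.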

We’re living through a moment where the entire computing stack is being redesigned from the transistor up. Not incrementally but fundamentally. New chips, new architectures, new compilers, new deployment models. The last time this happened was the PC revolution, and before that, the mainframe-to-minicomputer transition.

The next generation of products, the ones that will actually matter, won’t be built by people who only understand the app layer. They’ll be built by people who understand why Microsoft designed Maia 200, why CUDA’s power is both unbreakable and slowly eroding, why Qualcomm’s edge play might be the most underrated bet in tech, and why the compiler problem is the real trillion-dollar opportunity hiding in plain sight.

The kitchens are being rebuilt. And the chefs who understand the new equipment? They’re going to cook some incredible things.

If you made it this far, first of all, respect. Second, I’m genuinely curious what you think. Are we heading toward hardware fragmentation forever, or will the industry eventually standardize? Drop your thoughts, argue with me, tell me I’m wrong. That’s how the pattern emerges.